What Is LLMO? The Complete Beginner's Guide to AI Citation Optimization (2026)

Key Takeaways: 5 Things to Understand About LLMO

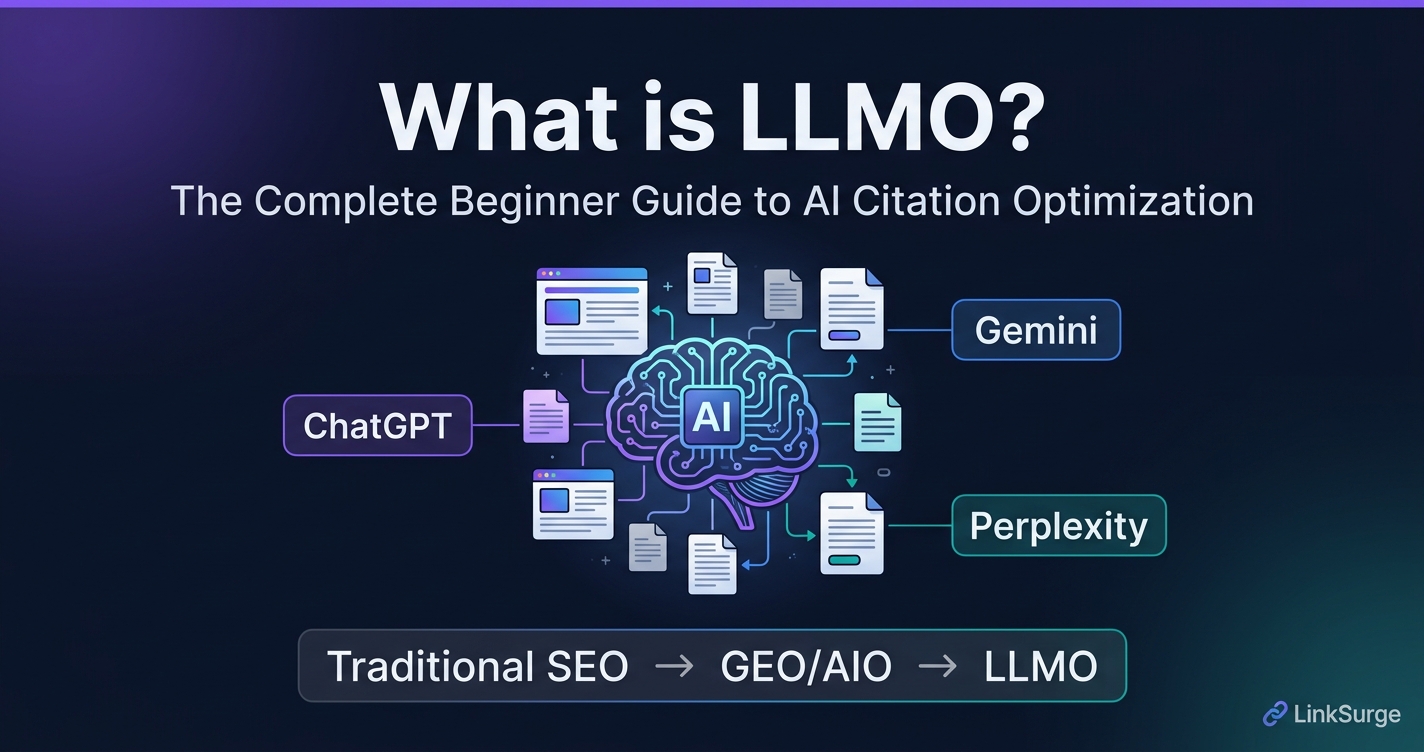

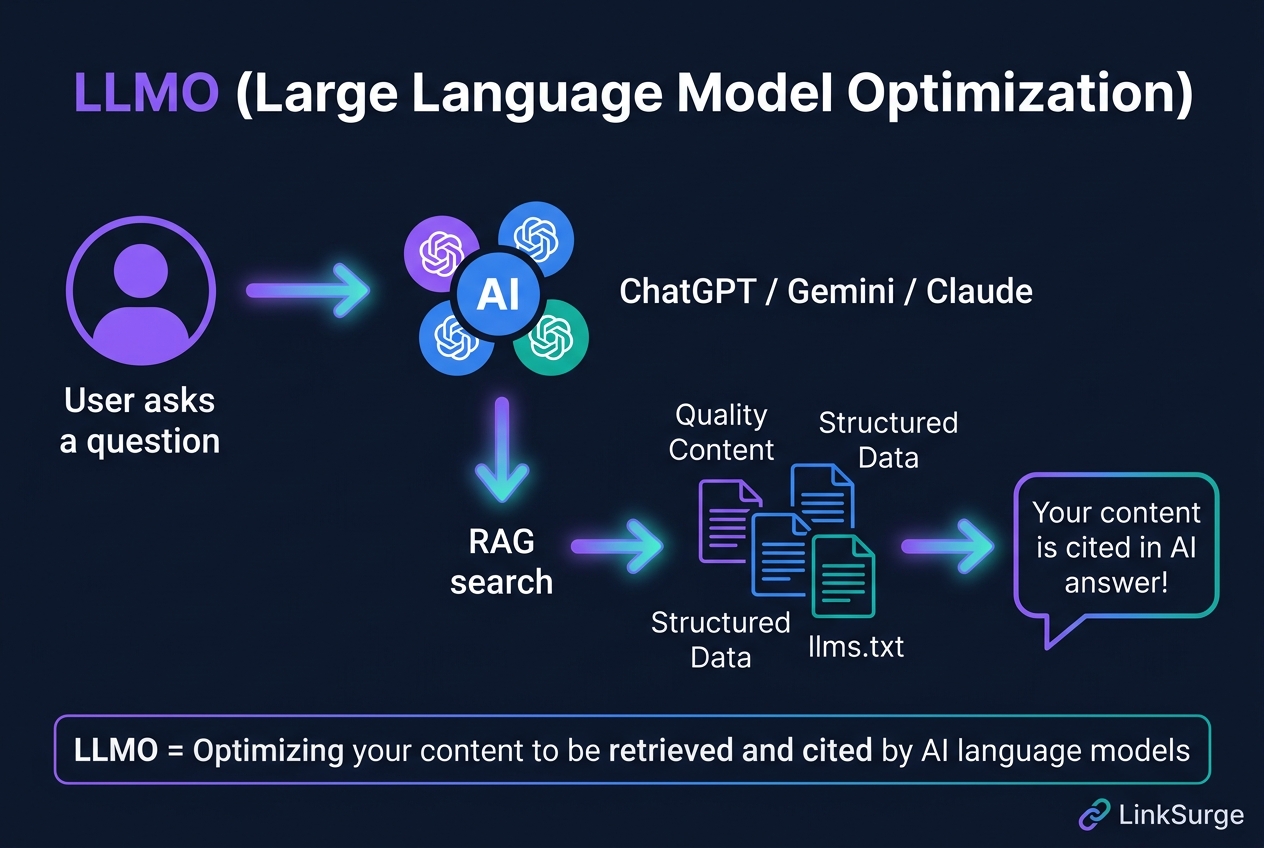

1. LLMO means "optimization for AI citation" — Getting ChatGPT, Gemini, and other AI systems to retrieve and cite your content when generating answers. That's the core goal.

2. Different from SEO, but complementary — Traditional SEO targets search rankings and clicks. LLMO targets citation and brand mention inside AI-generated answers. You don't have to choose — they work together.

3. RAG is the mechanism you need to understand — AI systems don't generate everything from memory. They search and retrieve relevant web content in real time. Optimizing for this retrieval process is the heart of LLMO.

4. You can start today without big investment — FAQ-formatted content, structured data (JSON-LD), and a clear author/entity footprint are the first steps — all achievable at low or zero cost.

5. Measure with AI citation metrics, not just rankings — AI-referred visitors tend to have higher conversion rates than traditional organic search visitors, according to emerging research. New metrics matter.

Here's a deeper look at each point.

1. What Is LLMO? A Simple Definition

LLMO (Large Language Model Optimization) is the practice of optimizing your content, website structure, and digital footprint so that AI systems — ChatGPT, Google Gemini, Anthropic Claude, Perplexity, and others — retrieve, reference, and cite your content when answering user queries.

Honestly, when I first heard the term, I assumed it was just another rebrand of SEO. It isn't. Here's the difference: with traditional SEO, success means your page appears in Google's results and a user chooses to click. With LLMO, success means an AI reads your content and includes it in its answer — sometimes before the user ever sees a search result page.

What LLMO is trying to achieve

The goal is simple: when someone asks ChatGPT "What's the best tool for tracking AI citations?" you want your brand or content to appear in the AI's answer. That's AI citation — and it's becoming a meaningful discovery channel.

What struck me while researching this is that AI-referred traffic, while smaller in volume than organic search, tends to arrive with higher purchase intent. The user has already received an AI-curated recommendation; they're clicking through to learn more or buy.

References: LLMO - Large Language Model Optimization - Effective World; What Is LLMO? Optimize Content for AI & Large Language Models - Search Engine Land

2. LLMO vs. SEO vs. GEO vs. AIO: What's the Difference?

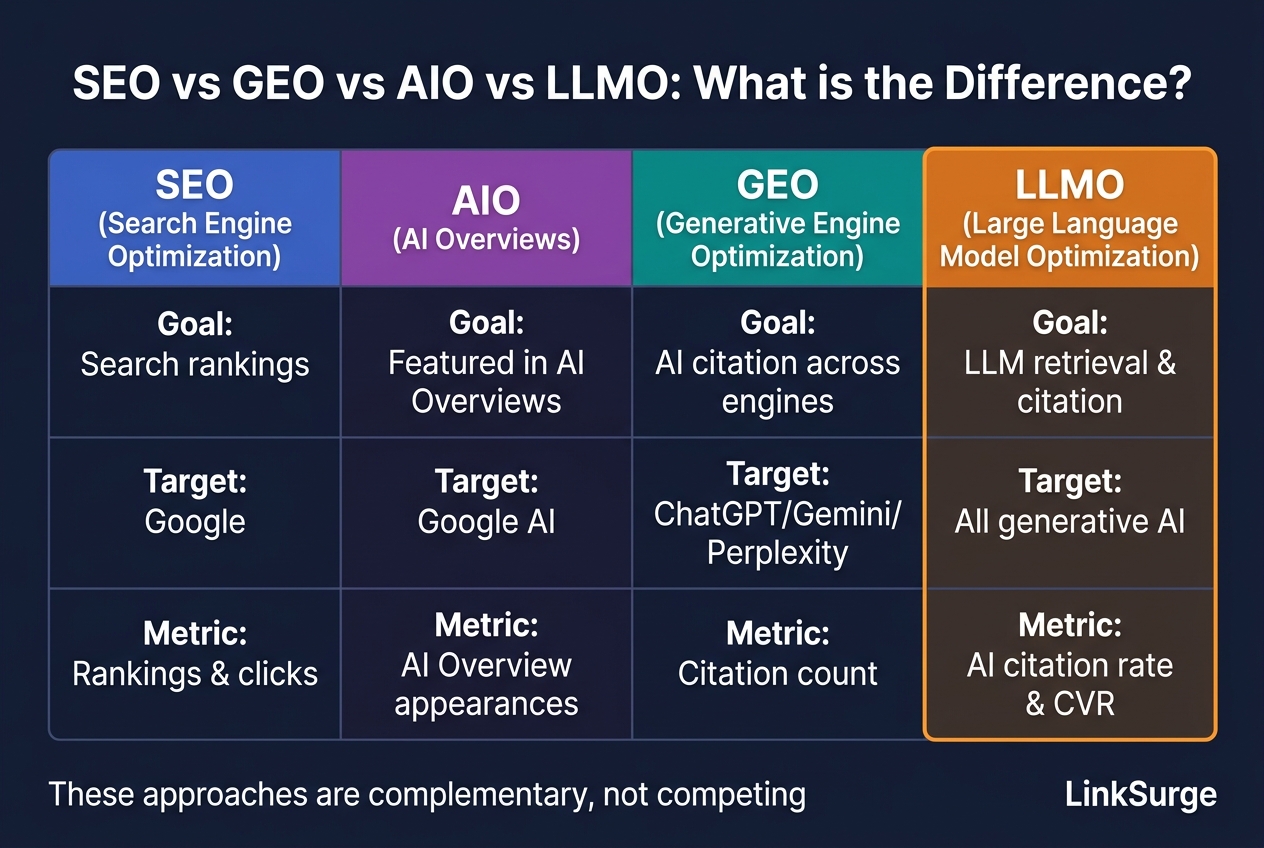

The alphabet soup of optimization acronyms is genuinely confusing. Let me sort it out.

The four concepts explained

SEO (Search Engine Optimization) is the original. Rank higher in Google, Yahoo, or Bing, get more clicks. On-page optimization, link building, technical SEO — these are the tools. Still the dominant channel for most businesses.

AIO (AI Overviews Optimization) targets the AI-generated summary that appears at the top of Google search results. Getting your content cited there means appearing before any organic result. It's Google-specific.

GEO (Generative Engine Optimization) is the broader English-language term for optimizing across multiple AI engines — ChatGPT, Gemini, Perplexity, Claude. Not limited to any single platform.

LLMO is essentially synonymous with GEO, with slightly more emphasis on the underlying language model layer. In practice, the tactics are the same: structured content, entity clarity, technical accessibility, and E-E-A-T signals.

Here's the thing: these aren't competing strategies. A piece of content that's well-structured, authoritative, and clearly written will perform well across all four dimensions simultaneously. Think of LLMO not as "instead of SEO" but as "SEO plus AI discoverability."

References: Large Language Model Optimization (LLMO) explained - Evergreen Media; LLM Optimization vs Traditional SEO - SEONos

For a deeper dive into GEO implementation strategies, see our Complete Guide to Generative Engine Optimization (GEO).

3. How AI Retrieves Your Content: RAG Explained

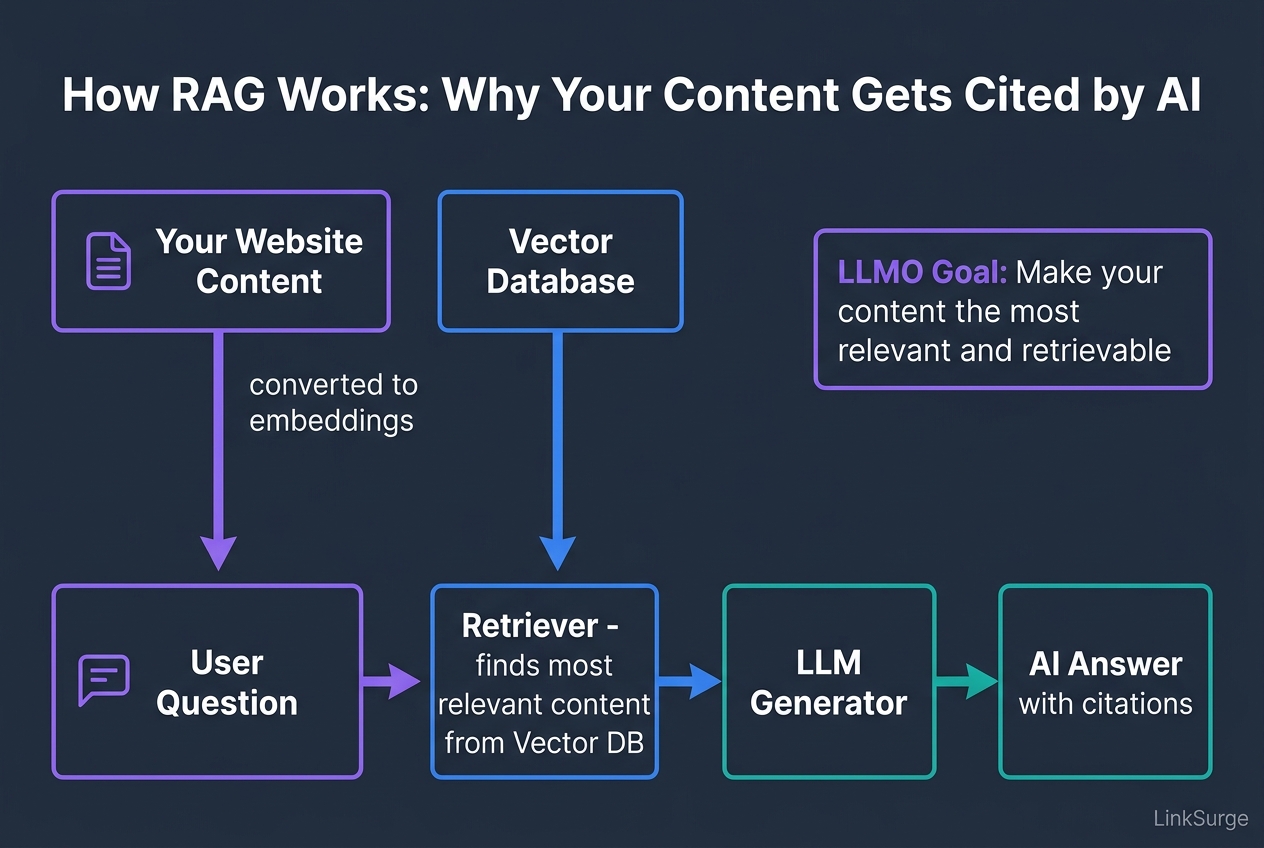

"Why does the AI cite one website and not another?" This is the question that unlocks everything. The answer is RAG — Retrieval-Augmented Generation.

RAG in plain English

Most AI chat systems don't just rely on their training data. They actively search for relevant content at query time and use it to generate grounded answers. Here's the simplified flow:

1. User submits a question → "What tools help with AI citation tracking?"

2. AI runs a retrieval query → Searches for relevant documents, typically through web search or vector similarity search

3. Retrieved documents are injected into the prompt → "Based on these sources, answer the question"

4. LLM generates a grounded response → Including citations to the retrieved sources

Your LLMO goal is to win steps 2 and 3. Make your content discoverable by the retrieval layer and trustworthy enough to be injected into the generation prompt.
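To make the flow above concrete, here is a toy sketch of the retrieval and prompt-injection steps. It is only an illustration under stated assumptions: real systems use learned embeddings and a search index, while this version stands in plain word-count vectors and cosine similarity. The URLs, document texts, and function names are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, documents: dict[str, str], top_k: int = 2) -> list[str]:
    """Return the URLs of the top_k documents most similar to the question."""
    query_vec = Counter(tokenize(question))
    scored = [
        (cosine(query_vec, Counter(tokenize(text))), url)
        for url, text in documents.items()
    ]
    scored.sort(reverse=True)
    # Drop documents that share no words with the question at all.
    return [url for score, url in scored[:top_k] if score > 0]


def build_prompt(question: str, documents: dict[str, str], sources: list[str]) -> str:
    """Inject the retrieved documents into the prompt sent to the LLM."""
    context = "\n".join(
        f"[{i}] {url}: {documents[url]}" for i, url in enumerate(sources, start=1)
    )
    return (
        "Based on these sources, answer the question and cite them.\n"
        f"{context}\n"
        f"Question: {question}"
    )


# Hypothetical mini "web" of three pages.
DOCS = {
    "https://example.com/citation-tools": "AI citation tracking tools monitor how often AI answers cite your domain.",
    "https://example.com/recipes": "A simple recipe for tomato pasta, garlic, and basil.",
    "https://example.com/seo-basics": "SEO basics: rankings, clicks, and link building tools.",
}

question = "What tools help with AI citation tracking?"
sources = retrieve(question, DOCS)
prompt = build_prompt(question, DOCS, sources)
print(prompt)
```

The page that uses the same terms as the question ranks first, and the unrelated recipe page never reaches the prompt. That is the mechanical reason the wording of your content matters.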

What makes content "RAG-friendly"?

- Atomic answers: Self-contained paragraphs that answer one question completely (40–60 words each)

- Semantic clarity: Use the exact terms your target audience uses in questions

- Structured signals: FAQ markup, Article schema — signals that tell the retriever what each piece of content is about

- Freshness: Retrieval systems often favor recently updated content, so keep key pages current

References: Retrieval Augmented Generation (RAG) for LLMs - PromptingGuide; What is RAG (Retrieval-Augmented Generation)? - OpenAPI

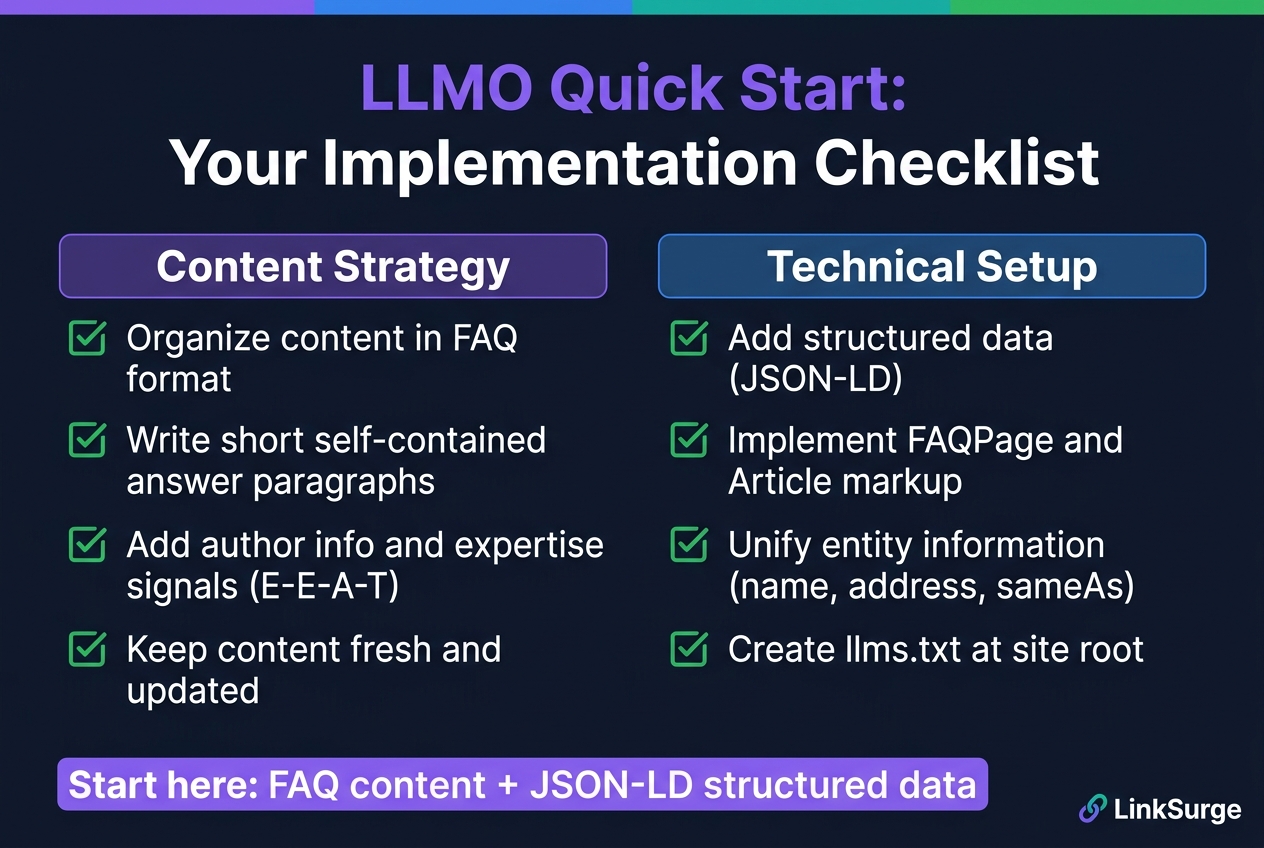

4. LLMO Implementation Checklist: Where to Start

Based on the research and my own testing, here's a prioritized checklist for getting started with LLMO — from free, quick wins to deeper investments.

Content strategy (start here)

1. Reformat key pages with FAQ structure

Explicit Q&A formatting helps AI systems identify and extract precise answers. "Q: What is LLMO? A: LLMO is..." is exactly the kind of extractable answer block that gets cited.

2. Write self-contained "answer paragraphs"

Each paragraph in your content should answer a specific question completely — without needing surrounding context. Aim for 40–60 words per answer block. This is the single highest-leverage LLMO tactic I've found.
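If you want to audit a draft against the 40–60 word target, a small script can flag paragraphs that fall outside it. This is a rough sketch: it assumes paragraphs are separated by blank lines and counts whitespace-separated words. The function name and thresholds are my own choices, not a standard.

```python
def answer_block_report(text: str, min_words: int = 40, max_words: int = 60):
    """Return (word_count, status) for each blank-line-separated paragraph."""
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    report = []
    for paragraph in paragraphs:
        count = len(paragraph.split())
        if count < min_words:
            status = "too short"
        elif count > max_words:
            status = "too long"
        else:
            status = "ok"
        report.append((count, status))
    return report


# Synthetic draft with three paragraphs of 10, 50, and 80 words.
draft = "\n\n".join(" ".join(["word"] * n) for n in (10, 50, 80))
report = answer_block_report(draft)
for count, status in report:
    print(f"{count:>3} words: {status}")
```

Word count is only a proxy. A 50-word paragraph that depends on the previous one for context still isn't self-contained, so treat the output as a prompt for manual review.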

3. Strengthen E-E-A-T signals

Include author names, credentials, publish dates, and update dates. For regulated industries (health, finance, legal), this is critical. AI systems weight authoritative sources more heavily.

4. Update content regularly

AI retrieval systems favor fresher content. Even minor updates that add new data, case studies, or statistics can improve retrieval frequency.

Technical setup (next steps)

5. Implement structured data (JSON-LD)

Add FAQPage, Article, Organization, and relevant schema types using JSON-LD. This helps AI systems understand what each page is about and which entities it discusses.
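As an illustration, the sketch below builds FAQPage markup from question and answer pairs using schema.org's FAQPage, Question, and Answer types. The helper names and the sample Q&A text are placeholders. Paste the resulting script tag into the page's HTML.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD document from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script tag used to embed it in HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


# Placeholder content; replace with the real questions from your page.
faq = faq_jsonld([
    ("What is LLMO?", "LLMO is the practice of optimizing content so AI systems retrieve and cite it."),
    ("Is LLMO the same as GEO?", "For most practical purposes, yes."),
])
print(as_script_tag(faq))
```

Keep the text in the markup identical to the visible Q&A on the page, so the structured data describes what readers actually see.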

6. Unify your entity footprint

Make sure your organization name, key product names, and author identities are consistent across all pages. Use sameAs to link to authoritative profiles (LinkedIn, Wikipedia, official registries).
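Here is a minimal sketch of what that looks like as Organization markup. The company name and every URL are hypothetical placeholders, and the profiles you list should be ones you actually control or that actually describe you.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Build schema.org Organization JSON-LD with sameAs profile links."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,      # use the exact same spelling on every page
        "url": url,
        "sameAs": same_as,  # authoritative profiles for the same entity
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical organization and profile URLs.
org = organization_jsonld(
    name="Example Co",
    url="https://example.com",
    same_as=[
        "https://www.linkedin.com/company/example-co",
        "https://en.wikipedia.org/wiki/Example_Co",
    ],
)
print(org)
```

Generating this from a single source of truth, rather than hand-editing it per page, is one simple way to keep the name and links consistent site-wide.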

7. Create an llms.txt file

An llms.txt file at your site root tells AI crawlers about your site's key pages and content — similar to robots.txt but for AI systems. It's low effort and potentially useful, though its direct impact on citation frequency hasn't been proven yet as of early 2026.

Caveat: As of February 2026, there's no peer-reviewed evidence that having an llms.txt directly increases AI citation frequency. Treat it as a forward-looking investment, not a guaranteed quick win.
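For reference, here is a small sketch that generates an llms.txt file. It follows my reading of the public llms.txt proposal (a title heading, a short blockquote summary, then sections of markdown links), so check the current proposal before relying on the layout. The site name, pages, and descriptions are placeholders.

```python
def build_llms_txt(site_name: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render llms.txt content: title, summary, then sections of links."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder site and pages.
llms_txt = build_llms_txt(
    site_name="Example Co",
    summary="Guides and tools for AI citation optimization.",
    sections={
        "Guides": [
            ("What Is LLMO?", "https://example.com/llmo-guide", "Beginner's guide to LLMO"),
            ("GEO Guide", "https://example.com/geo-guide", "Implementation strategies for GEO"),
        ],
    },
)

# Write the result to the file you will serve at the site root.
with open("llms.txt", "w", encoding="utf-8") as f:
    f.write(llms_txt)
print(llms_txt)
```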

You can monitor how AI engines currently perceive your site using LinkSurge's GEO analytics tools — track citation counts across ChatGPT, Gemini, and Perplexity in one dashboard.

References: Answer Engine Optimization (AEO): The 2026 Guide - LLMRefs; What Is LLM Optimization? Key Benefits & Definitions - Conductor

For context on how AI is reshaping search behavior broadly, see our guide on How AI Search Is Changing SEO in 2026.

5. Measuring LLMO: New Metrics for a New Era

Traditional SEO is measured in rankings, impressions, and clicks. LLMO needs different metrics.

The core LLMO KPIs

AI citation frequency

How often does your domain appear in AI-generated responses? Manual testing (ask ChatGPT, Gemini, and Perplexity relevant questions and note whether your site is cited) is a start. Automated monitoring tools are emerging.

AI-referred traffic

Track referral traffic from chatgpt.com, perplexity.ai, gemini.google.com (formerly bard.google.com), and similar domains in your analytics. This is currently small but growing.
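If your analytics export includes raw referrer URLs, you can label AI-referred sessions with a few lines of code. This is a sketch, and the hostname list is an assumption you should extend and maintain yourself, since AI products change domains over time.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed mapping of referrer domains to AI products; adjust for your own data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "bard.google.com": "Gemini",  # legacy domain from before the rename
}


def ai_source(referrer: str) -> Optional[str]:
    """Return the AI product name for a referrer URL, or None if it isn't one."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, label in AI_REFERRER_HOSTS.items():
        # Match the domain itself and any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return label
    return None


referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=llmo",
    "https://www.google.com/search?q=llmo",
    "",
]
labels = [ai_source(r) for r in referrers]
print(labels)
```

Ordinary Google search traffic stays unlabeled, because only the Gemini hostnames are listed. Sessions with no referrer at all can't be attributed this way, so treat the resulting numbers as a lower bound.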

AI citation share

What percentage of AI answers in your topic area include your domain? This "share of voice" metric is the most meaningful for competitive positioning.
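The calculation itself is simple once you have logged which domains each AI answer cited. The sketch below assumes you record one entry per test question per engine. The record layout, domains, and questions are invented for illustration.

```python
def citation_share(answers: list[dict], domain: str) -> float:
    """Fraction of recorded AI answers that cite the given domain."""
    if not answers:
        return 0.0
    hits = sum(1 for answer in answers if domain in answer["cited_domains"])
    return hits / len(answers)


# Hypothetical log of manual tests: which domains each answer cited.
answers = [
    {"engine": "ChatGPT", "question": "best AI citation tracker?",
     "cited_domains": ["example.com", "competitor.example"]},
    {"engine": "Gemini", "question": "best AI citation tracker?",
     "cited_domains": ["competitor.example"]},
    {"engine": "Perplexity", "question": "best AI citation tracker?",
     "cited_domains": ["other.example"]},
    {"engine": "ChatGPT", "question": "what is LLMO?",
     "cited_domains": []},
]

own_share = citation_share(answers, "example.com")
rival_share = citation_share(answers, "competitor.example")
print(f"example.com: {own_share:.0%}, competitor.example: {rival_share:.0%}")
```

AI answers vary from run to run, so repeat the same question set on a schedule and compare shares over time rather than reading much into a single snapshot.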

AI-to-conversion rate

Segment your analytics by AI referrer and measure conversion rates separately. Early evidence suggests AI-referred visitors convert at higher rates than average organic visitors — they arrive pre-qualified by AI recommendation.
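A minimal way to do that segmentation, assuming each session has already been labeled with a traffic source and a converted flag, looks like this. The session data below is made up purely to show the arithmetic. It is not evidence about real conversion rates.

```python
from collections import defaultdict


def conversion_rates(sessions: list[tuple[str, bool]]) -> dict[str, float]:
    """Conversion rate per traffic source from (source, converted) pairs."""
    totals = defaultdict(int)
    conversions = defaultdict(int)
    for source, converted in sessions:
        totals[source] += 1
        if converted:
            conversions[source] += 1
    return {source: conversions[source] / totals[source] for source in totals}


# Made-up sessions: (traffic source, did the visit convert?)
sessions = [
    ("ai", True), ("ai", False),
    ("organic", True), ("organic", False), ("organic", False), ("organic", False),
]
rates = conversion_rates(sessions)
for source, rate in rates.items():
    print(f"{source}: {rate:.0%}")
```

With AI-referred traffic still small, a few sessions can swing the rate a lot, so wait for a reasonable sample size before drawing conclusions from the comparison.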

Frequently Asked Questions

Is LLMO the same as GEO?

For most practical purposes, yes. GEO (Generative Engine Optimization) is the dominant English-language term; LLMO is more common in Japanese markets. GEO slightly emphasizes multi-engine optimization; LLMO emphasizes the underlying language model layer. The implementation tactics are essentially identical.

Should I stop doing SEO and switch to LLMO?

No. SEO still drives the majority of web traffic and isn't going anywhere. LLMO is an additive strategy. Here's the good news: most LLMO best practices (quality content, structured data, E-E-A-T) also improve traditional SEO. You're not choosing between them.

Does llms.txt actually work?

The honest answer is: we don't know yet. It's technically straightforward to implement and doesn't hurt anything. But as of early 2026, there's no published evidence that having an llms.txt file measurably increases AI citation rates. Prioritize content quality and structured data over llms.txt.

How long does it take to see results?

Model training data isn't updated in real time, so content changes may take weeks to months to be reflected in answers that rely on training alone. For AI systems that use real-time web retrieval (like Perplexity), you may see faster results. Build LLMO as a sustained practice, not a one-time sprint.

What does LLMO cost?

The core tactics — FAQ formatting, structured data, entity consistency — cost nothing beyond the time to implement them. The bigger investments are AI citation monitoring tools and, if needed, RAG infrastructure for enterprise use cases. Start free, scale as needed.

Conclusion: LLMO Is the Next Chapter of Content Strategy

The way people find information is changing. A meaningful and growing share of discovery now happens through AI-generated answers, not search result pages. LLMO is the practice of making sure your content is part of those answers.

What I find encouraging about LLMO is that it doesn't require abandoning what works. Good content — clear, authoritative, well-structured, regularly updated — is good for SEO and good for LLMO. The principles converge.

Start with two things: reformat your most important pages as FAQ content, and add JSON-LD structured data. Those two moves cost nothing beyond your time and can improve your chances of being cited by AI systems.

LinkSurge's GEO dashboard and LLM mentions tracking give you the data to see where you stand today — and measure your progress as you implement LLMO strategies. Give it a try.