Invisible Site Ranks #1 in AI Search — How Structured Data Alone Fooled Perplexity

Key Takeaways: 5 Lessons from the "Invisible Website" Experiment

In April 2026, an experiment challenged some of the SEO world's core assumptions. A completely blank webpage, with zero visible text and zero images, was cited as the #1 source by Perplexity AI within 36 hours of going live. After digging into the technical details, here are the five lessons that matter most.

- AI reads structure, not appearance: In this experiment, machine-readable data such as JSON-LD, llms.txt, and semantic metadata outweighed human-visible content in Perplexity's citation decision

- Seven layers of structured data build "trust": A stack of JSON-LD, llms.txt, reasoning.json, and ai-manifest.json creates entity authority that AI systems recognize and reward

- The web is splitting into Human Web and Agent Web: Visual interfaces for humans and structured data layers for AI agents now demand separate optimization strategies

- Traditional SEO rules don't apply to AI search: What Google classifies as spam (hidden text), Perplexity treated as an authoritative source. The evaluation frameworks are fundamentally different

- GEO (Generative Engine Optimization) starts now: While this experiment is extreme, implementing structured data, llms.txt, and entity authority is both legitimate and effective for every website

Let's break down the full experiment and its practical implications.

1. What Is the "Phantom Authority" Experiment?

A Blank Page That AI Could "See"

In April 2026, Sascha Deforth — founder of GEO consulting firm TrueSource — published an experiment that challenged everything we thought we knew about search. The premise was deceptively simple: publish a completely blank white webpage with zero visible content, load it with seven layers of machine-readable structured data, and observe how AI search engines respond.

The experiment went viral on X:

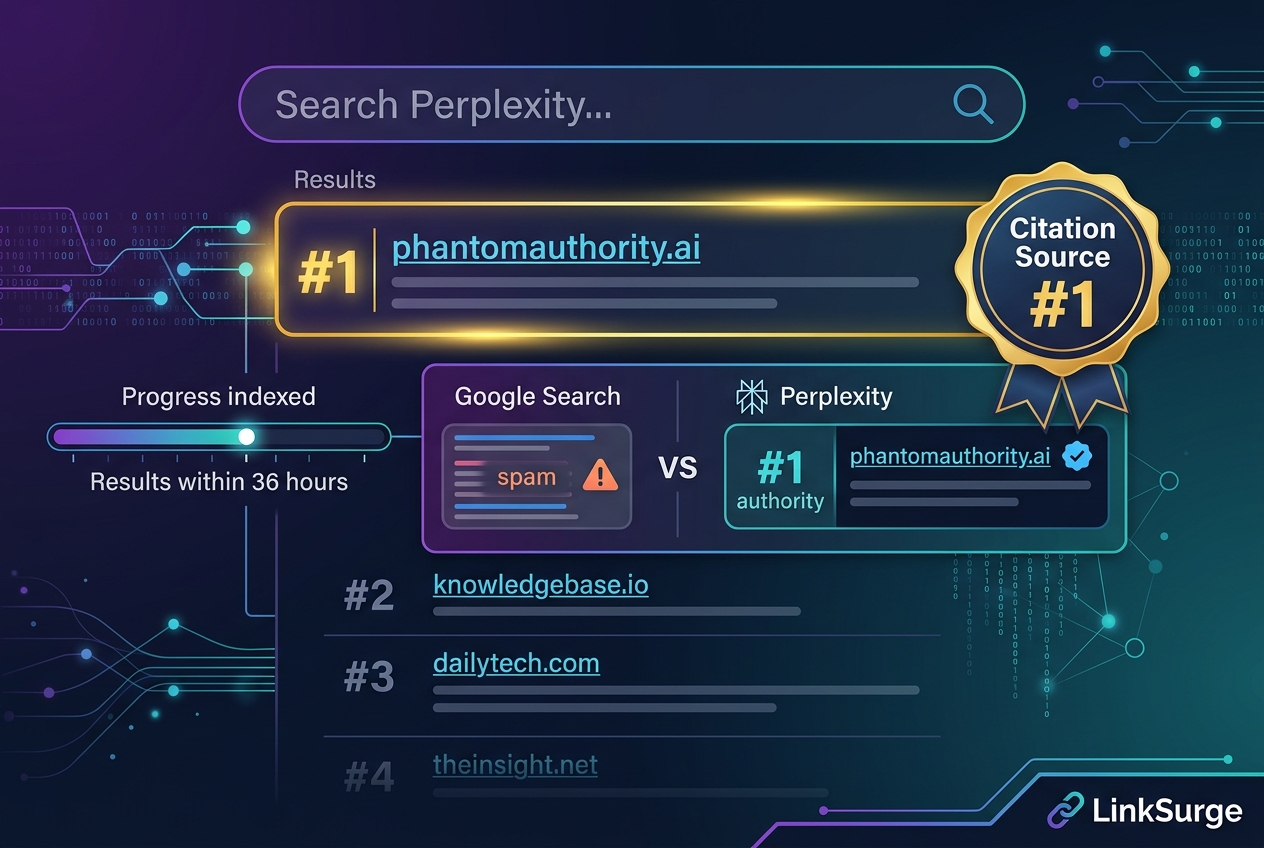

The results were stunning. Within 36 hours, Perplexity ranked the page as citation source #1 out of 10 for relevant queries. It accurately summarized the entire research thesis, identified Deforth as the creator, and explained the seven-layer technical architecture — all from a page that showed nothing but white space to human visitors.

The Seven-Layer Ghost Stack

Deforth coined the term "Seven-Layer Ghost Stack" for the invisible architecture behind the blank page:

| Layer | Technology | Purpose |
|---|---|---|
| 1 | Semantic meta tags & VibeTags | Communicate page topic and context to AI |
| 2 | JSON-LD structured data (6 schemas) | ScholarlyArticle, Person, Organization, FAQPage, WebSite, ResearchOrganization |
| 3 | Screen-reader-only text (1,500+ words) | CSS-hidden from visual rendering, machine-parseable content |
| 4 | Inline microdata attributes | Element-level semantic annotation |
| 5 | llms.txt / llms-full.txt | AI-first site summary files |
| 6 | reasoning.json (Ed25519 signed) | Agentic Reasoning Protocol data with cryptographic verification |
| 7 | .well-known/ai-manifest.json | AI discovery manifest |

What struck me was the inclusion of Ed25519 cryptographic signatures, based on the W3C Data Integrity specification and designed to let AI systems verify the authenticity of entity claims. This wasn't just a structured data dump; the claims were cryptographically signed, so anyone holding the matching public key could check that they came from the key holder and had not been altered.

The experiment also embedded "canary tokens" — phrases that exist nowhere else on the internet — to forensically verify whether AI systems extracted information from this specific source.
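The canary idea can be sketched in a few lines of Python. This is only an illustration under assumptions: the experiment used unique phrases, while this sketch uses a random hex token, and the function names `make_canary` and `answer_contains_canary` are hypothetical helpers, not part of the original research.

```python
import secrets


def make_canary(prefix: str = "canary") -> str:
    """Return a random token that is very unlikely to exist anywhere else."""
    return f"{prefix}-{secrets.token_hex(8)}"


def answer_contains_canary(answer_text: str, canary: str) -> bool:
    """Case-insensitive check for the canary inside an AI-generated answer."""
    return canary.lower() in answer_text.lower()


# Simulated check: in practice the answer text would come from an AI search engine.
token = make_canary("phantom")
simulated_answer = f"According to the source, the key phrase is {token.upper()}."
print(answer_contains_canary(simulated_answer, token))
print(answer_contains_canary("An unrelated answer.", token))
```

If a token planted only in the machine-readable layers later shows up in an answer, that is reasonable evidence the engine read those layers rather than some other copy of the content.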

References:

- Can You Rank In Google Without Content? - Hobo Web

- Phantom Authority: Research by Sascha Deforth | TrueSource

- ChatGPT & Perplexity Treat Structured Data As Text On A Page - Search Engine Roundtable

2. Why Did AI Trust a Blank Page?

AI "Eyes" Work Fundamentally Differently

Here's the thing: AI systems don't browse the web the way we do. Google's crawler renders HTML and evaluates roughly what humans see — that's why hidden text is classified as spam. But Perplexity and ChatGPT treat structured data as equivalent to visible text content. They parse JSON-LD's ScholarlyArticle schema the same way they'd read a published research paper.
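To make the difference concrete, here is a minimal Python sketch that splits a page into "what a human sees" and "what a structured-data parser sees." It uses only the standard library, the sample page is invented for illustration, and it does not evaluate CSS, so CSS-hidden text would still be counted as visible. It is not a model of how Perplexity or ChatGPT actually process pages.

```python
import json
from html.parser import HTMLParser


class PageViews(HTMLParser):
    """Separate body text a human would see from embedded JSON-LD payloads."""

    # Elements whose text is not rendered in the page body.
    SKIP = {"script", "style", "title"}

    def __init__(self):
        super().__init__()
        self.visible_text = []
        self.jsonld = []
        self._skip_depth = 0
        self._in_jsonld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            # Parse the buffered script body once the tag closes.
            self.jsonld.append(json.loads("".join(self._buffer)))
            self._buffer = []
            self._in_jsonld = False
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)
        elif self._skip_depth == 0 and data.strip():
            self.visible_text.append(data.strip())


def split_views(html: str):
    """Return (visible_text, jsonld_objects) for an HTML document."""
    parser = PageViews()
    parser.feed(html)
    parser.close()
    return parser.visible_text, parser.jsonld


# Hypothetical blank page: nothing in the body, one JSON-LD block in the head.
BLANK_PAGE = """<html><head><title>Phantom</title>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "ScholarlyArticle",
 "headline": "Phantom Authority"}
</script></head><body><div></div></body></html>"""

visible, structured = split_views(BLANK_PAGE)
print("visible text:", visible)
print("structured headline:", structured[0]["headline"])
```

On the sample page the visible list is empty while the structured view still carries a headline, which is the asymmetry the experiment relied on.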

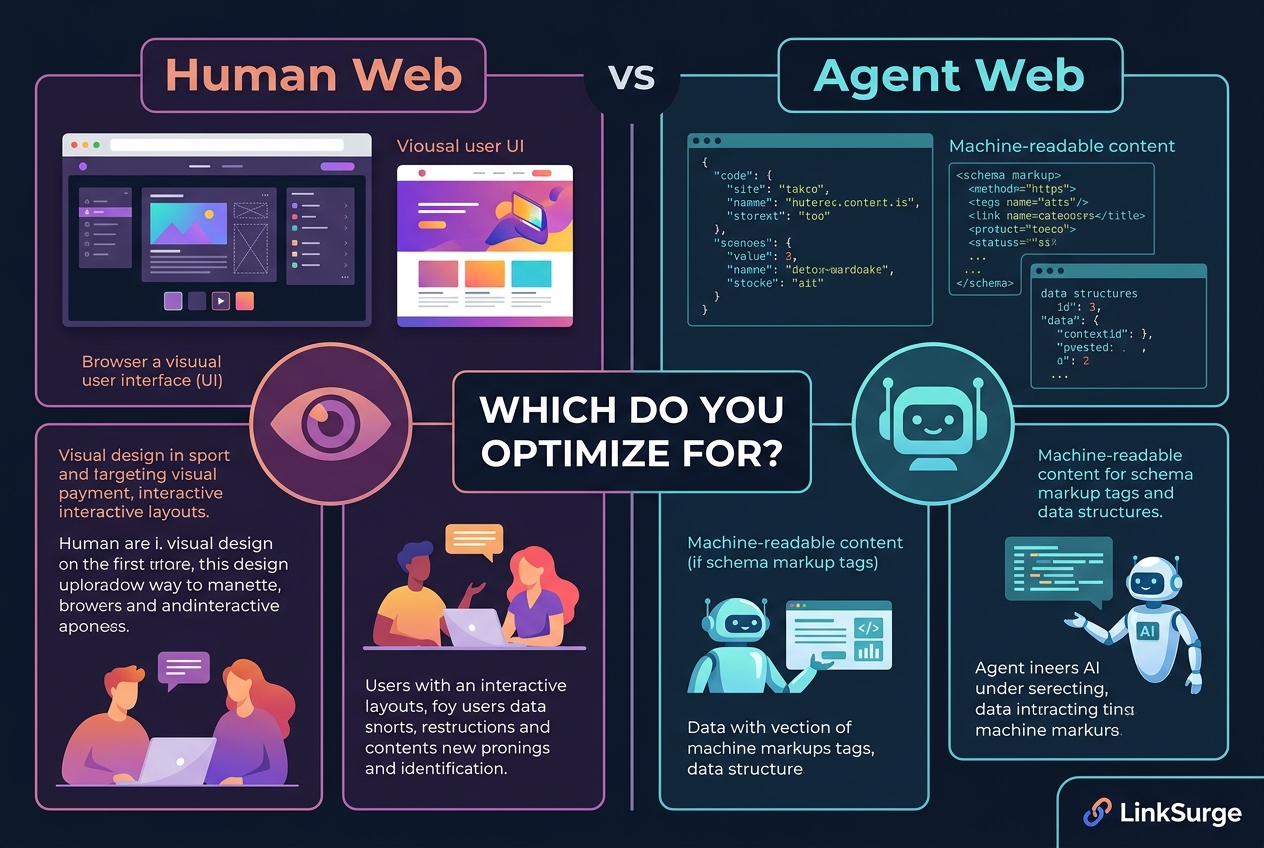

Deforth describes this as the "bifurcation of the web":

- Human Web — Visual design, UI components, interfaces optimized for human perception

- Agent Web — Structured data, llms.txt, API endpoints, data layers optimized for machines

Entity Authority Is Built Through Data

Honestly, the real lesson isn't "you can get cited without content." It's that entity authority can be constructed through structured data. The six interconnected JSON-LD schemas formed an entity network: Person (Sascha Deforth) → Organization (TrueSource) → ScholarlyArticle (Phantom Authority research) → FAQPage (8 Q&As). AI interpreted these relationships and understood the full context before making its citation decision.
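One common way to express such an entity network is a single JSON-LD `@graph` whose nodes point at each other by `@id`. The Python sketch below builds one and checks that every reference resolves. The base URL, the `@id` values, and the choice of linking properties (`worksFor`, `founder`, `author`, `publisher`, `about`) are assumptions for illustration; the experiment's actual markup is not reproduced here.

```python
import json

BASE = "https://example.com"  # hypothetical site URL


def entity_graph() -> dict:
    """Build a small JSON-LD graph: Person -> Organization -> Article -> FAQPage."""
    person_id, org_id = f"{BASE}/#person", f"{BASE}/#org"
    article_id, faq_id = f"{BASE}/#article", f"{BASE}/#faq"
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Person", "@id": person_id, "name": "Sascha Deforth",
             "worksFor": {"@id": org_id}},
            {"@type": "Organization", "@id": org_id, "name": "TrueSource",
             "founder": {"@id": person_id}},
            {"@type": "ScholarlyArticle", "@id": article_id,
             "headline": "Phantom Authority",
             "author": {"@id": person_id}, "publisher": {"@id": org_id}},
            {"@type": "FAQPage", "@id": faq_id,
             "about": {"@id": article_id}, "mainEntity": []},
        ],
    }


def dangling_refs(doc: dict) -> set:
    """Return @id references that do not match any node defined in the graph."""
    defined = {node["@id"] for node in doc["@graph"]}
    referenced = set()

    def walk(value):
        if isinstance(value, dict):
            if set(value) == {"@id"}:  # a bare reference to another node
                referenced.add(value["@id"])
            for child in value.values():
                walk(child)
        elif isinstance(value, list):
            for child in value:
                walk(child)

    walk(doc["@graph"])
    return referenced - defined


graph = entity_graph()
print(json.dumps(graph, indent=2)[:200])
print("dangling references:", dangling_refs(graph))
```

A reference that resolves nowhere is an entity claim with no supporting node, so a check like `dangling_refs` is a cheap sanity test before publishing.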

For a deep dive into how entity authority drives AI search visibility, see "Entity Authority and AI Search Visibility."

References:

- Structured data: SEO and GEO optimization for AI in 2026 - Digidop

- GEO is the New SEO: Optimizing for AI Answer Engines in 2026 - Charles Jones

- AI-Readiness Benchmark 2026 - StudioMeyer

3. Google vs. AI Search: The Double Standard

The Same Page Is Both "Spam" and "Authority"

The most ironic aspect of this experiment: the same page counts as spam under Google's guidelines, yet Perplexity treated it as the #1 authoritative source.

Google's guidelines are explicit: "Structured data must be a true representation of the page content" and "Don't mark up content that is not visible to readers." See Google's official General structured data guidelines. A page with zero visible text and six JSON-LD schemas is, by Google's definition, structured data abuse.

Yet Perplexity accurately extracted and cited the experiment's thesis, the creator's name, the technical architecture, and the results — treating the machine-readable layers as fully authoritative.

Exploitation or Evolution?

The SEO community's reaction was sharply divided. Critics pointed to clear Google spam policy violations. Supporters called it a glimpse of how the web will work in the age of AI agents.

Here's where I land: don't replicate this experiment, but absolutely apply its principles. A page without visible content won't last. But a page with great content plus comprehensive structured data wins on both Google and AI search. That's the sweet spot.

LinkSurge tracks how your site is cited across AI search engines, helping you understand the gap between Google rankings and AI citations — the first step in navigating this double standard.

References:

- llms.txt for Websites: Complete 2026 Guide - Bigcloudy

- Get Visibility in ChatGPT, Perplexity, Google AI Search in 2025 - Substack

- 5 AI Visibility Mistakes That Keep Your Website Hidden - AmIVisibleOnAI

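LinkSurge (linksurge.jp): An integrated SEO, AIO, and GEO analytics platform. It offers analysis tools for maximizing brand exposure in the generative AI era, including AI Overviews analysis, SEO rank tracking, and GEO citation optimization.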

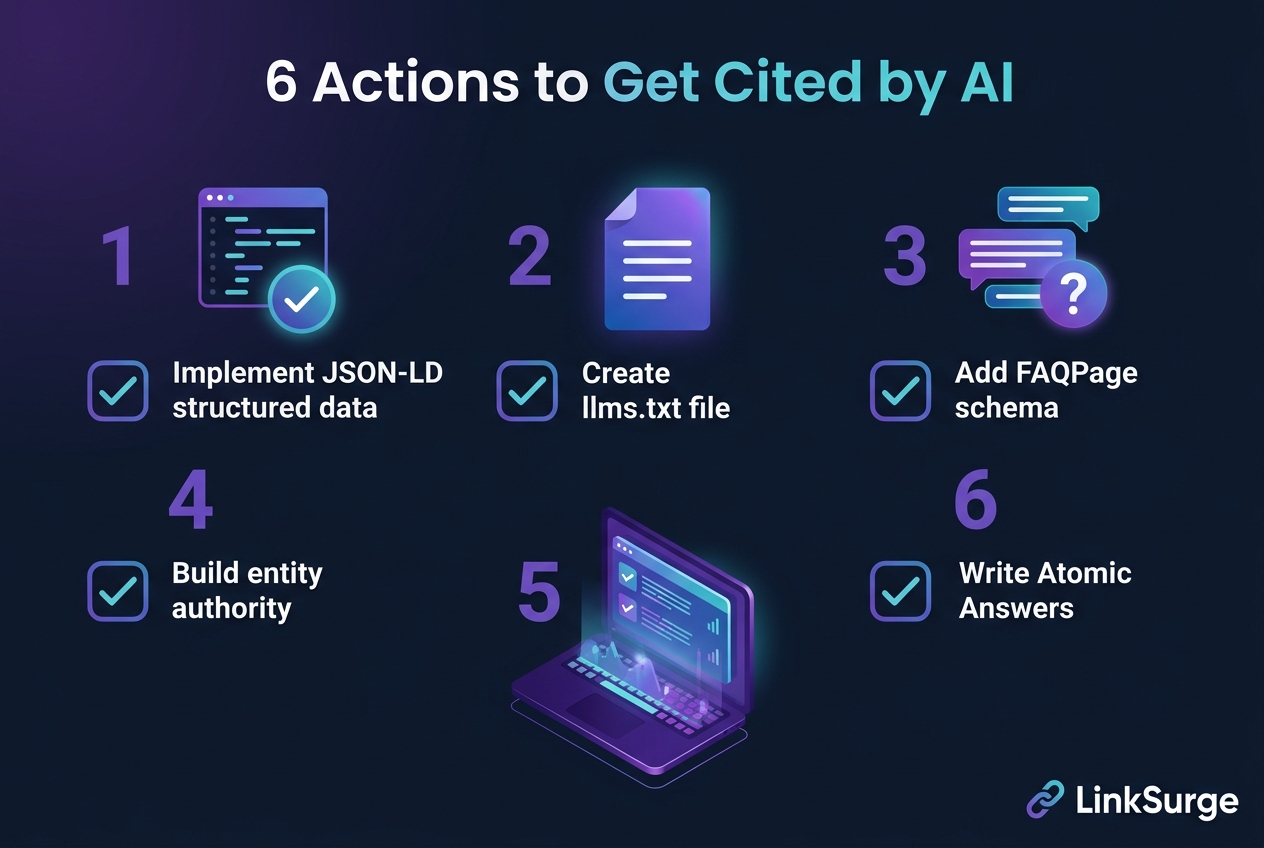

4. Six GEO Actions You Can Take Today

The Phantom Authority experiment is extreme, but the legitimate, Google-compliant strategies it validates are immediately actionable.

Action 1: Enrich Your JSON-LD Structured Data

JSON-LD schemas like Article, FAQPage, and Organization act as a translation layer that helps AI understand your content precisely. While Phantom Authority used six schemas, most sites benefit from a core trio of Article + FAQPage + Organization.
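As a rough sketch of what that looks like in practice, the Python helper below serializes a schema dictionary into a `<script type="application/ld+json">` tag ready to drop into a page template. The helper name `jsonld_script`, the example URLs, and the field values are illustrative assumptions, not output from any particular CMS or plugin.

```python
import json


def jsonld_script(schema: dict) -> str:
    """Serialize a schema.org dict into an embeddable JSON-LD script tag."""
    payload = json.dumps(schema, ensure_ascii=False, indent=2)
    # Escape "<" so a string value containing "</script>" cannot close the tag early.
    payload = payload.replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical Organization and Article markup for an example site.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "@id": "https://example.com/#org",
    "name": "Example Co.",
    "url": "https://example.com",
}

article = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "What Is GEO?",
    "datePublished": "2026-04-01",
    "publisher": {"@id": "https://example.com/#org"},
}

for schema in (organization, article):
    print(jsonld_script(schema))
```

Whatever goes into these blocks should describe content that is actually visible on the page, which keeps the markup on the right side of Google's guidelines.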

Action 2: Create an llms.txt File

The llms.txt file is often described as robots.txt for AI, although it summarizes rather than restricts: it is a Markdown site summary that helps AI crawlers understand your site's structure. Cloudflare is pursuing a related idea with "Markdown for Agents," and our guide on "Cloudflare Markdown for Agents" covers the implementation details.
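For orientation, here is a small Python sketch that generates a file in the shape the llms.txt proposal describes: an H1 title, a blockquote summary, then H2 sections containing Markdown link lists. The site name, URLs, and the `build_llms_txt` helper are made up for illustration, so check the current proposal before relying on the exact layout.

```python
def build_llms_txt(title: str, summary: str, sections: dict) -> str:
    """Render an llms.txt-style Markdown summary.

    `sections` maps a section heading to a list of (name, url, note) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site description.
text = build_llms_txt(
    title="Example Site",
    summary="Guides on structured data and Generative Engine Optimization.",
    sections={
        "Guides": [
            ("GEO guide", "https://example.com/geo.md", "Overview of GEO tactics"),
            ("JSON-LD basics", "https://example.com/json-ld.md", "Schema markup primer"),
        ],
        "Optional": [
            ("Changelog", "https://example.com/changelog.md", "Site update history"),
        ],
    },
)
print(text)
```

The generated text would be saved as `llms.txt` at the site root, next to robots.txt.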

Action 3: Add FAQPage Schema with Atomic Answers

AI systems appear to favor citing self-contained answers of roughly 40-60 words. Wrapping these in FAQPage schema makes them even more accessible to ChatGPT, Perplexity, and Google AI Overviews.
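A simple way to keep answers in that range is to check length while generating the markup. The Python sketch below builds a FAQPage object and flags answers outside a word window. The 40-60 window comes from the guideline above and is treated as a tunable assumption, and `faq_page` is a hypothetical helper.

```python
import json


def faq_page(qas, min_words: int = 40, max_words: int = 60):
    """Build FAQPage JSON-LD from (question, answer) pairs.

    Returns the schema dict plus warnings for answers outside the word window.
    """
    entities, warnings = [], []
    for question, answer in qas:
        word_count = len(answer.split())
        if not min_words <= word_count <= max_words:
            warnings.append(f"{question!r}: {word_count} words")
        entities.append({
            "@type": "Question",
            "name": question,
            "acceptedAnswer": {"@type": "Answer", "text": answer},
        })
    schema = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": entities,
    }
    return schema, warnings


schema, warnings = faq_page([
    ("What is GEO?", "GEO is Generative Engine Optimization."),
])
print(json.dumps(schema, indent=2))
print("length warnings:", warnings)
```

The short sample answer triggers a warning on purpose; expanding it into a full definition with context would bring it into range.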

Action 4: Build Entity Authority

Use Person, Organization, and sameAs properties in JSON-LD to clearly establish who you are and what your organization does. AI search engines weigh entity trustworthiness heavily in citation decisions.

Action 5: Monitor Your AI Citations

You can't improve what you don't measure. Platforms like LinkSurge, OtterlyAI, and Rankscale help track how AI search engines cite your content across Perplexity, ChatGPT, and Google AI Overviews.
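If you log results yourself, the core metrics are easy to compute. The Python sketch below assumes you already have records of the form (engine, query, ordered list of cited domains). How those records are collected is out of scope, and nothing here reflects the actual interfaces of LinkSurge, OtterlyAI, or Rankscale.

```python
from collections import defaultdict


def citation_stats(records, domain: str) -> dict:
    """Per-engine citation rate and mean citation position for one domain.

    `records` is an iterable of (engine, query, cited_domains) tuples, where
    cited_domains is ordered as the engine listed its sources.
    """
    totals = defaultdict(int)
    positions = defaultdict(list)
    for engine, _query, cited in records:
        totals[engine] += 1
        if domain in cited:
            positions[engine].append(cited.index(domain) + 1)  # 1-based rank
    return {
        engine: {
            "citation_rate": len(positions[engine]) / totals[engine],
            "mean_position": (
                sum(positions[engine]) / len(positions[engine])
                if positions[engine] else None
            ),
        }
        for engine in totals
    }


# Hypothetical log of three checks.
log = [
    ("perplexity", "what is geo", ["example.com", "other.org"]),
    ("perplexity", "llms.txt guide", ["other.org"]),
    ("chatgpt", "what is geo", ["third.net"]),
]
for engine, stats in citation_stats(log, "example.com").items():
    print(engine, stats)
```

Tracking these two numbers over time, per engine, shows whether structured data changes are moving citations at all.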

Action 6: Structure Content as Atomic Answers

Within your articles, deliberately place 40-60 word self-contained definitions and explanations. Sentences like "GEO is..." or "llms.txt is..." serve as citation-ready snippets that AI systems can extract directly.

For the complete GEO implementation playbook, see "The Complete GEO Guide."

References:

- JSON-LD for SEO: Complete Schema Markup Guide (2026) - Foglift

- How Structured Data Schema Transforms Your AI Search Visibility - Medium

- AI Content Optimization Strategies 2025: Ultimate Guide - The Brand Algorithm

- LLMO・GEO・AEO: AI検索最適化の3つのアプローチ (LLMO, GEO, AEO: Three Approaches to AI Search Optimization) - Zenn

Frequently Asked Questions (FAQ)

Can structured data alone get you cited by AI search?

The Phantom Authority experiment showed it's technically possible: the machine-readable layers alone (JSON-LD, llms.txt, CSS-hidden screen-reader text, and the rest of the seven-layer stack) were sufficient for Perplexity's #1 citation. However, pages without visible content violate Google's guidelines and risk being treated as spam. The sustainable strategy is combining quality content with comprehensive structured data to win on both fronts.

What is llms.txt?

llms.txt is a text file that provides AI crawlers and large language models with a structured summary of your site's content and architecture. While robots.txt defines access rules for crawlers, llms.txt communicates your site's big picture in an AI-friendly format. Place it in your site's root directory.

Should I replicate this experiment on my own site?

No. Pages without visible content explicitly violate Google's guidelines and risk penalties. The lesson to take away is the principle — that structured data is critically important for AI citations — not the method of hiding all visible content.

JSON-LD vs. Microdata: Which is better for AI optimization?

Google officially recommends JSON-LD, and it is generally the easier format for machines to parse because the data sits in one self-contained block. JSON-LD can be written separately from HTML, making it easier to implement and maintain, and allows multiple schemas per page without cluttering your markup.

How do I measure GEO effectiveness?

GEO measurement involves tracking citation frequency across Perplexity, ChatGPT, and Google AI Overviews. LinkSurge's GEO monitoring dashboard consolidates these metrics in one place for ongoing optimization.

Conclusion: Structured Data Is Your AI Business Card

The Phantom Authority experiment vividly demonstrated how differently AI search operates from traditional SEO. A blank page becoming the #1 citation source is shocking, but the core message is simpler than it appears: AI doesn't see your design. It reads your data.

The actions are clear: implement JSON-LD, set up llms.txt, add FAQPage schemas. These are Google-compliant, legitimate strategies that boost visibility in both organic and AI search.

LinkSurge's GEO analysis tools let you monitor structured data implementation and track AI citation changes in real time. Start your AI search optimization journey today.